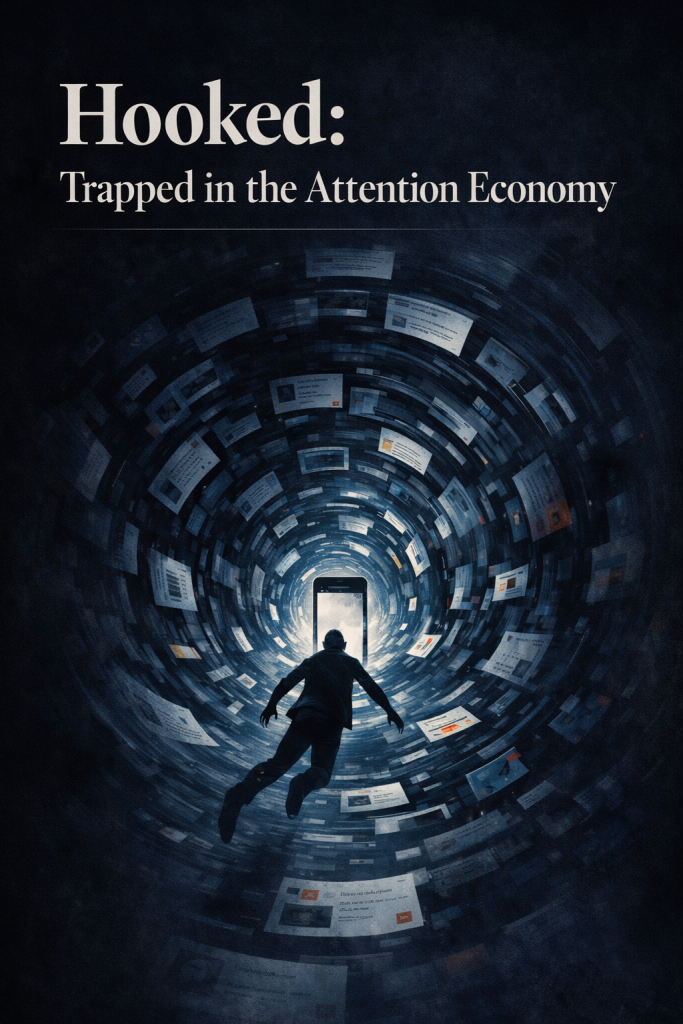

The Modern Internet Is Designed to Keep You Inside, Not Informed


2.5 hours: average daily social media use

86%: share of watch time via recommendations

0: natural stopping points by design

There is a moment, familiar and almost embarrassing, when you pick up your phone to check one notification and resurface forty minutes later, unsure of what you actually looked at. You didn’t plan to scroll. You didn’t decide to stay. The platform decided for you, and its toolkit is extraordinary.

The modern internet’s most powerful products were not designed to help you find what you came for. They were designed to make you forget you came for anything at all. The goal is not utility. It is occupancy — the total capture of human attention, measured in minutes and monetised in milliseconds.

“The goal is not utility. It is occupancy — the total capture of human attention, measured in minutes and monetised in milliseconds.”

Three tools that rewired how we browse


Each of these features was introduced as a convenience. Infinite scroll, patented by Aza Raskin in 2006, was meant to spare users the friction of clicking “next page.” Autoplay was framed as a seamless viewing experience. Recommendations, we were told, would surface content we’d love but never find. And in a narrow sense, all of this is true. But convenience has a cost, and that cost has compounded quietly over decades.

Aza Raskin himself later apologised. He has estimated that his invention wastes the equivalent of roughly 200,000 human lifetimes every day, a number that does not fill him with pride. “It’s as if,” he said, “they took behavioural cocaine and sprinkled it over the interface.” The language of addiction is not metaphor here. Platform designers borrowed directly from the literature on slot machines and variable reward schedules, the same psychology that keeps a gambler at the terminal long past rational self-interest.

How the attention economy was built, feature by feature

2006: Infinite scroll is patented. The page-end as a natural stopping point is quietly abolished from the web’s most-visited properties.

2012: Facebook’s News Feed algorithm shifts from chronological to engagement-ranked. The feed stops being a window onto your network and becomes a curation machine optimised for time-on-site.

2015: YouTube reports that its recommendation engine drives over 70% of total watch time. The front door to content is no longer search; it is the algorithm’s suggestion.

2017: Internal Facebook research, later made public, shows that algorithmic amplification of emotionally charged content is driving divisive behaviour. The amplification is not corrected; it is a feature, not a bug.

2020 – present: Short-form video (TikTok, then Reels, then Shorts) reduces the unit of content to under sixty seconds and the interval between dopamine hits to near-zero. The infinite scroll becomes the infinite reel.

Users rarely leave. That is the point.

A healthy information ecosystem would be one from which users depart enriched: with answers, with understanding, with something they can use. The platforms we inhabit optimise for the opposite: the longest possible stay, with the fewest possible exits. Links to external sources are algorithmically deprioritised. Content that provokes a reaction (outrage, anxiety, desire) is surfaced ahead of content that merely informs. The measure of success is not comprehension. It is clock time.

This matters enormously beyond personal productivity. When the mechanism by which most people encounter information is also the mechanism designed to maximise emotional arousal and minimise departure, the epistemology of entire populations shifts. It becomes structurally harder to encounter nuance, to reach closure, to be satisfied. The platform profits most from the reader who remains undecided, unsettled, and scrolling.

What we are beginning to understand

The conversation is changing. Former insiders, among them engineers, product managers, and executives from the biggest platforms, have spent the last several years describing the architecture of manipulation from the inside. Tristan Harris, a former Google design ethicist, has argued that the attention economy is a “race to the bottom of the brainstem,” where the winner is whoever can most reliably exploit the oldest and least rational parts of human cognition. The Center for Humane Technology, which Harris co-founded, has shifted public discourse in meaningful ways.

Regulatory attention is also arriving, if slowly. The European Union’s Digital Services Act now requires very large platforms to explain the main parameters of their recommender systems and to offer users at least one feed option that is not based on profiling. Several countries are legislating minimum age requirements for social platforms. In the United States, the debate is louder than the legislation, but the legislative proposals are multiplying.

But structural change on the regulatory level moves at the speed of democratic deliberation — which is to say, slowly, and often in the wrong direction. The more important reckoning may be personal and cultural: the gradual accumulation of awareness that the device in your pocket was not designed with your interests in mind, that the feed is not a neutral surface, and that the choices it presents you with are not really yours.

“The feed is not a neutral surface. The choices it presents you with are not really yours.”

Attention as a resource, not a given

None of this is irreversible. The web that existed before the engagement era is not gone — it is underused. RSS feeds still work. Static websites still publish. Long-form journalism, newsletters, and podcasts with fixed endpoints are thriving precisely because they promise something the algorithmic feed cannot: a beginning, a middle, and an end. People who feel most overwhelmed by the modern internet are often those who have never been shown that alternatives exist at the infrastructure level, not just the willpower level.

Choosing how you encounter information is one of the few genuine acts of autonomy available in the current digital environment. It requires some friction — the deliberate friction of choosing sources, curating feeds manually, turning off autoplay, and treating the absence of a recommendation as an invitation to decide for yourself. These are not large gestures. But in an environment engineered to eliminate precisely this kind of friction, they are meaningful ones.

The internet once promised to make us all more informed — more connected to one another and to the world beyond our immediate experience. That promise has not been broken so much as quietly redirected. The window became a mirror. The library became a casino. The question now is not whether we notice, but whether noticing is enough to change what we do next.